I sometimes think that news is a bit like food. The Economist and The Atlantic are kale. Cable news is McDonald’s. The New York Times and Washington Post are Michelin star restaurants run by eccentric chefs who might be going insane; sometimes they’re great, sometimes you find a human finger in your risotto and think “what the fuck is going on here?” Twitter and Facebook are garbage bags full of Sour Patch Kids and whipped cream.

I’d be lying if I said that I exclusively consume a healthy, balanced media diet. I spend plenty of time scooping big handfuls from that delicious trash bag of sugary crap. Reading thoughtful pieces from quality sources is work; I have to force myself to do it. I’ll sometimes tell myself: “If you read this article about the global cobalt shortage then you can reward yourself with the article you want to read: 15 Former Child Stars Who Work Shitty Jobs Now (#7 Is Also Fat!).”

Of course, since I accidentally ended up working in media, I think a lot about what people want to read and hear. The secret recipe, to be honest, is pretty simple: People want to hear stories that fit their worldview. Narratives are the coin of the realm. There are exceptions — one of the great things about Substack is that some truly outstanding newsletters are making independence their brand — but the big money isn’t in telling people stuff that’s new or that they might not want to hear. The big money is in telling people stories that confirm what they think they already know.

I recently came across a book that made me reflect on why narratives are so central to how we understand the world. The book was A Thousand Brains: A New Theory of Intelligence by Jeff Hawkins. It was a very good book, though I did not have fun reading it. It was work; it didn’t give me the dopamine hit I would have gotten from, say, an article about how Game of Thrones went from being an all-time great show to an all-time gigantic pile of shit. That’s a thing that I believe and would like to have told back to me for the millionth time. Which is exactly the type of phenomenon that Hawkins’ book might help explain.

It would be a disservice to Hawkins’ argument to try to summarize it in a few paragraphs. So, let’s get started on that disservice: Hawkins thinks that we have an “old brain” and a “new brain”. The “old brain” is everything that’s not the neocortex, so: The long thing, the lumpy bit, the part that looks like a big walnut, and the cauliflower-lookin’ thing (Hawkins is a bit more specific). The old brain governs basic survival, which basically means that when we encounter something, the old brain sends a signal that tells us whether to eat it or fuck it.

The “new brain” — the neocortex — makes up 70 percent of the human brain. It controls what we might call “higher function”, i.e. more elaborate ways of deciding what to eat and/or fuck. An interesting thing about the neocortex is that it’s more homogeneous than you might think; one part is similar to another part, which is similar to the part after that. In that way, it’s a lot like the Wes Anderson catalogue: Sample any bit, and you’re likely to find the same thing (specifically: a symmetrically-framed scene featuring a Wilson brother using old-timey technology and having existential angst about his dad).1

Because all parts of the neocortex seem similarly built, Hawkins and his team at the Numenta Institute for Brain Shit (“Numenta” for short) set out to find an elemental explanation for what that tissue does. The theory they developed is that the neocortex creates “reference frames” — basically models — for how the world works. So, if I’m about to encounter a basketball, my brain has a model for how a basketball looks, feels, and behaves. My brain quickly makes a series of predictions based on that model, and — assuming those predictions prove correct when I encounter the ball — sends appropriate instructions (specifically: “Pass it, Whitey — you can’t shoot for shit.”). If my brain’s predictions are not met — if the basketball, say, has the texture of Jell-O or the density of a neutron star — then suddenly it’s DEFCON 4 in my body: Something unexpected has happened, which represents possible danger and demands my attention. Hawkins calls this general theory of function the “Thousand Brains Theory”, presumably after the seminal cognitive scientist Archibald Thousandbrains.

Hawkins thinks that this theorized method of interaction — knowledge > prediction > information > reaction — applies to complex ideas as much as basic objects. Just as I have a mental model of how I might interact with a basketball, I can have a mental model of how I’m likely to interact with, say, a “networking” event (I’m going to spend a week telling myself “you have to do this” and then bail after 20 minutes and decide “I’ll just be poor”). Anything that can be imagined can be modeled; I can have models for concepts like democracy, justice, or mathematics. Hawkins believes that to be highly-intelligent is to have a highly-accurate model of how the world works.

I have no idea if Hawkins’ theory is correct. My brain emphatically does not possess a model for assessing theories of cognition — that space has been devoted to commercial jingles from the ‘80s and famous breasts that I’ve seen in movies. But from my totally-ignorant perspective, his theory makes sense. It seems intuitive to me that intelligence might be a way for organisms to understand their surroundings, and that higher intelligence could represent a more sophisticated understanding of those surroundings. After all, a synonym for “dumb” is “simple”. A simple cognitive model is “berries are tasty”; a better model is “berries are tasty except for nightshade”; and a genius model is “berries are tasty except for nightshade and if you pretend that some berries are magic people will overpay for them based on virtually no evidence.”

If Hawkins is right, then I think his theory might tell us why narratives are so powerful in shaping how we see the world. The first relevant point would be that narratives closely mimic the way we take in information. We receive information through experience, not abstraction. We’re presently in a pandemic in which mountains of statistical evidence have been published showing the efficacy of vaccines, but to many people, that data has proved less persuasive than the tale of Nicki Minaj’s cousin’s friend’s massively swollen balls. Say what you will about the quality of the information, Minaj’s method of conveyance is more intuitive. There’s no precedent in primitive society for internalizing abstract concepts represented by data, but there is a precedent for thinking “WHOA, look at what happened to that dude’s nuts!” If you saw a member of your tribe’s nads swell to the size of grapefruits — or if you even heard about it — you’d avoid whatever you thought caused the swelling. The Tale Of The Jumbo Testes would probably greatly shape your mental model of how the world works.

Once a model is built, there’s not much reason to adjust it without a concrete incentive. And political engagement isn’t typically a highly-efficient method of achieving personal goals; in terms of delivering direct personal benefit, a crude model of the political world will probably work about as well as an elaborate one. Plus, since experiences shape our models of the world, whatever model a person develops is likely to be self-serving anyway. Which is to say: Once someone has a model of how the political world works, they probably won’t be motivated to expend effort making that model better. A person’s model of the political world is almost never like an athlete’s body, demanding constant maintenance and upkeep; it’s like an athlete’s body after he retires — you might as well let the thing go to shit because it doesn’t really matter.

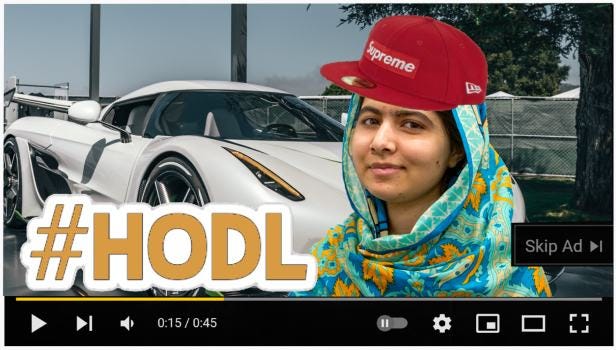

Since there’s rarely any real incentive to improve one’s model, there’s also no incentive to seek out better information. In fact, there’s a clear disincentive, because new information could be threatening. Returning to the basketball example: If I caught the ball and found it to be as dense as a neutron star, that would be a surprising and unexplained change to my environment. That’s pretty much the definition of a cause for alarm in the primal world. Similarly, if Malala Yousafzai got really into crypto, and started popping up in sketchy-looking YouTube ads pushing MalalaCoin, that would be surprising. It would create cognitive dissonance that would cause me stress. I’d have to either live with that stress or go through the hassle of creating a new mental model that can accommodate Malala in a flat-billed Supreme hat showing off her Lambo and telling me I “gotsa get sprung on this coin, kid.” I would have been better off without that data.

The clear incentive is for me to try to only encounter information that already fits my mental model. This is exponentially more possible today than it was 20 or 40 years ago. The splintering of media makes it easy to only encounter narratives that match what I already believe — Twitter and Facebook’s algorithms basically guarantee this. Back in the day, finding bespoke news was difficult; if you didn’t like what Walter Cronkite was saying, your only option was to flip over to NBC and have David Brinkley tell you pretty similar stuff. Cronkite and Brinkley might also have had some qualms about aggressively cultivating their coverage to reflect their audience’s beliefs. But today, cultivating your coverage to reflect your audience’s beliefs pretty much is the business model.

People in media have every incentive to simplify things. The less nuance they provide, the less likely they are to disrupt their viewers’ models of the world. Also, if mental models get more sophisticated with intelligence, then presenting a highly-complex, nuanced version of reality will greatly limit your audience. Better to omit the bits of information that your audience doesn’t like and double down on the bits that they do. The goal is to always confirm and never confront your audience’s beliefs; every story should be a parable in which you find a new way to tell your audience “you’re right”.

The result is news that’s essentially comfort food. It’s safe and reassuring; it flatters our intelligence and never makes us do the work of tearing down our model and building something new. Because — to return to where I began — obtaining the information to build a good model is hard. It sucks. I don’t want to read Jason Furman and the Financial Times and think about whether I’ve been too dovish on inflation. I want to watch that compilation of Orson Welles wine commercial outtakes for the millionth time because it supports my belief that some people we hail as geniuses are just weirdos. I posted the video below — you really should watch it, it’s at least as good as Citizen Kane. So please enjoy. But let’s all recognize: This is junk food. We should probably be adults and consume something healthy later on.

1. I like a lot of Wes Anderson’s work, but I still feel like this joke tracks.
